Introduction

Generating images from text prompts has become easier than ever, yet many users find that a single prompt rarely captures the nuances of their creative vision. PASTA (Preference Adaptive and Sequential Text-to-image Agent), developed by researchers at Google, addresses this by having the user and the AI collaborate over several turns, so that the generated images better match individual preferences.

Understanding the Limitations of Current Text-to-Image Models

Text-to-image (T2I) models have shown impressive capabilities, but they often struggle to capture a user's specific creative intent from a single prompt. Many users end up in a frustrating cycle of tweaking prompts in the hope of reaching the result they want. PASTA aims to replace that cycle with an iterative dialogue between the user and the AI, improving the chances of a satisfactory outcome.

Introducing PASTA: A New Collaborative Agent

PASTA is a reinforcement learning (RL) agent that adapts to a user's preferences over the course of an interaction. Rather than treating each prompt as a one-shot request, PASTA engages the user in a multi-turn exchange that progressively refines the initial image. Trained on a dataset of sequential preferences and assessed through human evaluations, PASTA produced images that raters found more satisfying than those of a baseline model. The foundational dataset comprises over 7,000 human interactions, which gives the agent a solid basis for training.

How PASTA Works: User Simulations and Interaction

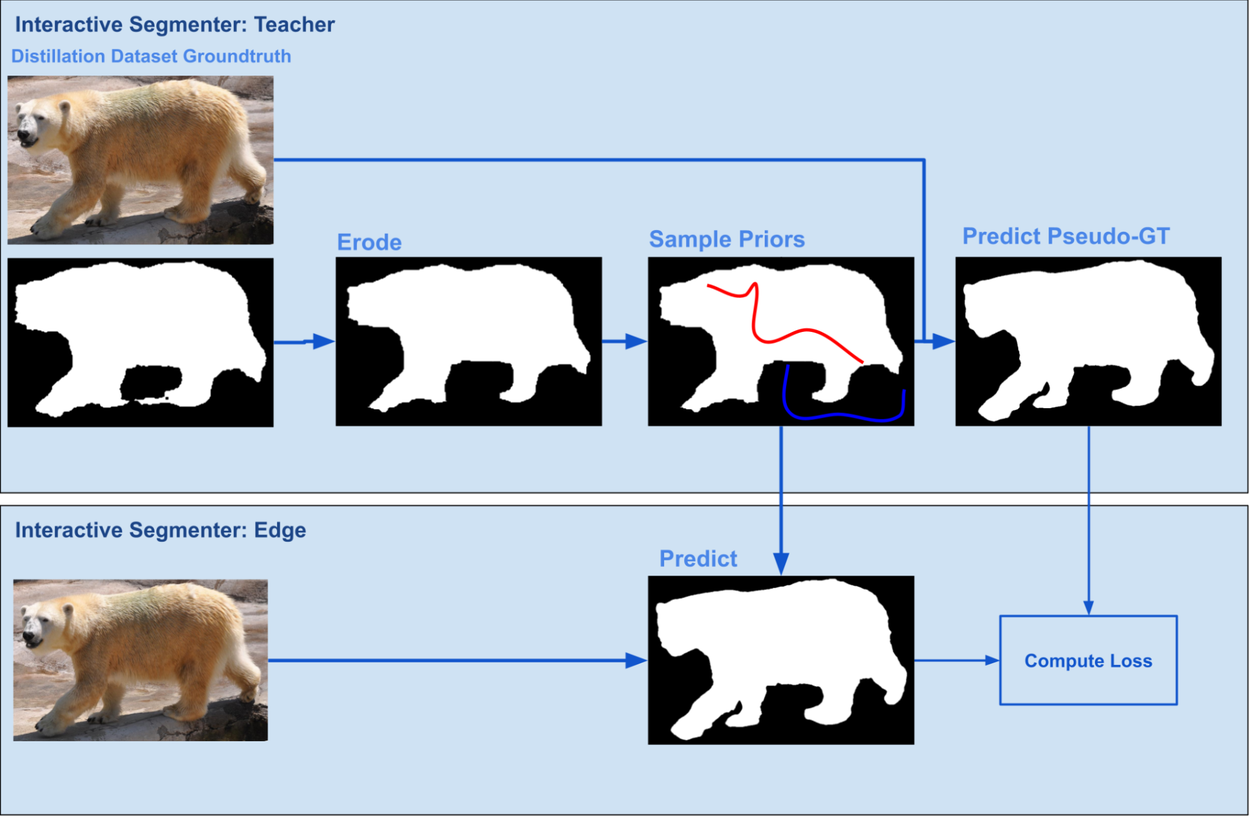

PASTA's main strength is that it learns directly from user preferences. To gather the required interaction data, the researchers used a two-stage approach that combines real human feedback with large-scale simulation:

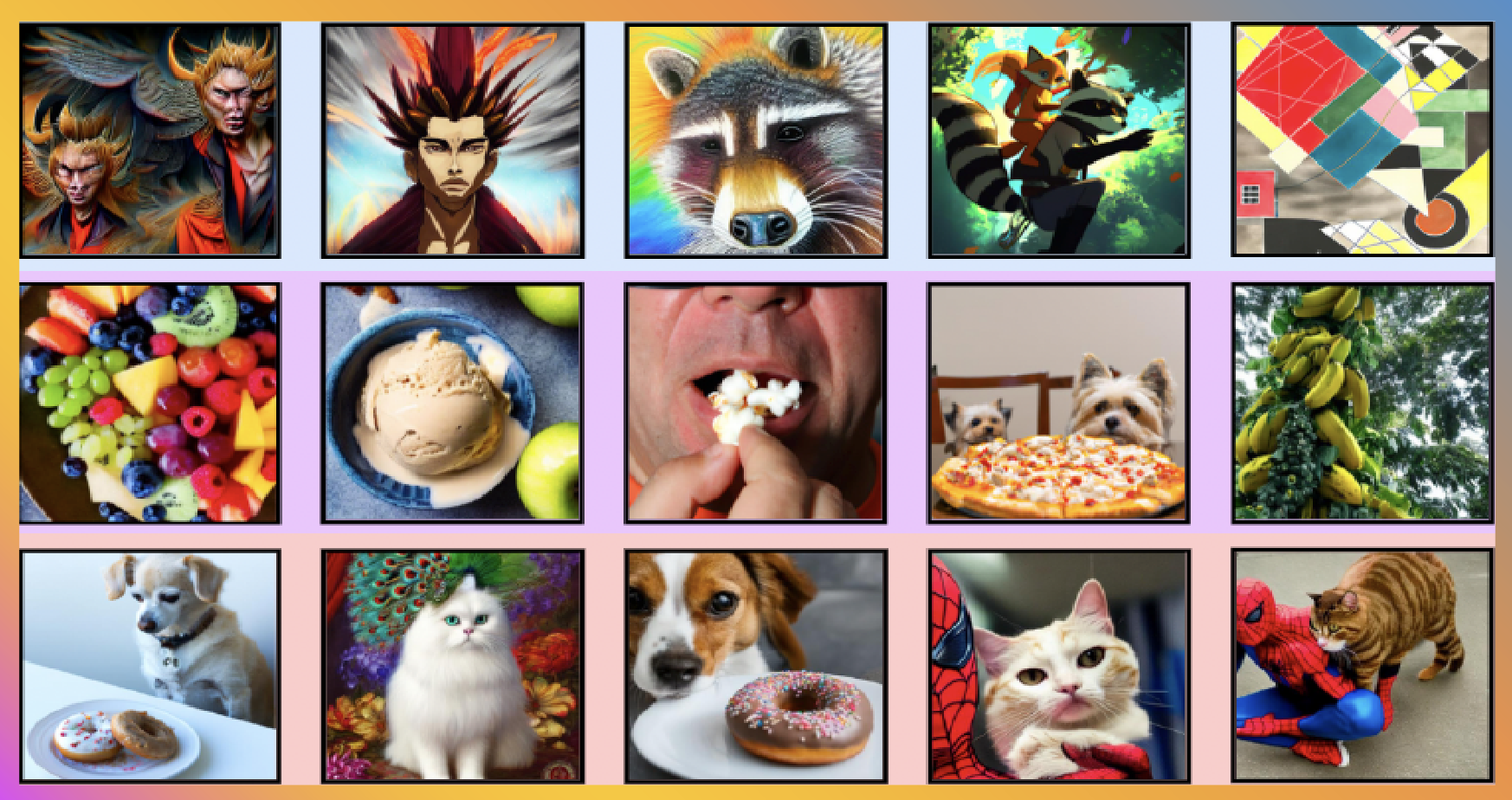

– High-Quality Dataset Creation: The team built a foundational dataset of prompt expansions generated by Gemini Flash and the corresponding images generated by the Stable Diffusion XL T2I model.

– User Simulator Training: A user simulator is trained on the foundational dataset to mimic human choices, which makes it possible to explore user preferences at a much larger scale than human data alone allows. It groups users into clusters with similar tastes, allowing PASTA to better adapt to individual needs.
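To make the idea of a user simulator more concrete, here is a minimal sketch in Python. It is not the model from the PASTA work: the user types, the three-number image features, and the softmax choice rule are all assumptions chosen for illustration. It only shows how a simulated user belonging to a taste cluster might pick one image from a set of options.

```python
import math
import random

# Hypothetical user types: each is a weight vector over made-up style features
# (say, "photorealistic", "colorful", "minimalist"). Illustrative only.
USER_TYPES = {
    "realist": [1.0, 0.2, 0.0],
    "colorist": [0.1, 1.0, 0.3],
}

def utility(user_weights, image_features):
    """Score an image for a user type as a dot product of weights and features."""
    return sum(w * f for w, f in zip(user_weights, image_features))

def choice_probabilities(user_weights, slate, temperature=1.0):
    """Softmax over utilities: probability that the simulated user picks each image."""
    scores = [utility(user_weights, img) / temperature for img in slate]
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def simulate_choice(user_weights, slate, rng, temperature=1.0):
    """Sample the index of the image the simulated user would choose."""
    probs = choice_probabilities(user_weights, slate, temperature)
    return rng.choices(range(len(slate)), weights=probs, k=1)[0]

if __name__ == "__main__":
    rng = random.Random(0)
    # Two hypothetical images described by the same three style features.
    slate = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
    print(choice_probabilities(USER_TYPES["realist"], slate))
    print(simulate_choice(USER_TYPES["colorist"], slate, rng))
```

Sampling from the probabilities, rather than always taking the highest-scoring image, keeps the simulated users a little noisy, which is closer to how real people choose.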

During a session, PASTA first generates multiple candidate prompt expansions using a multimodal model. It then selects the most promising candidates and presents the resulting images to the user. The user picks a preferred image, and that choice guides the next round of refinement.
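The loop described above can be sketched roughly as follows. Every function here is a hypothetical stand-in: `generate_candidates` fakes the multimodal model with a list of style words, `select_slate` replaces the trained agent with a simple top-k by a scoring function, and the number of turns, candidates, and options shown per turn are arbitrary defaults rather than values from the PASTA work.

```python
import random

def generate_candidates(prompt, n, rng):
    """Stand-in for the multimodal model: returns n hypothetical prompt expansions."""
    styles = ["watercolor", "photorealistic", "low-poly", "noir", "pastel", "isometric"]
    return [f"{prompt}, {rng.choice(styles)} style, variant {i}" for i in range(n)]

def select_slate(candidates, score_fn, k):
    """Stand-in for the agent's selection step: keep the k highest-scoring candidates."""
    return sorted(candidates, key=score_fn, reverse=True)[:k]

def run_session(prompt, user_pick, score_fn, turns=3, n_candidates=8, slate_size=4, seed=0):
    """Run a multi-turn session and return the expansion chosen at each turn."""
    rng = random.Random(seed)
    history = []
    current = prompt
    for _ in range(turns):
        candidates = generate_candidates(current, n_candidates, rng)
        slate = select_slate(candidates, score_fn, slate_size)
        chosen = slate[user_pick(slate)]  # user_pick returns an index into the slate
        history.append(chosen)
        current = chosen  # the next turn refines the user's latest choice
    return history

if __name__ == "__main__":
    # A toy "user" who always takes the first option, and a toy score (string length).
    for step in run_session("a cat on a rooftop", user_pick=lambda s: 0, score_fn=len):
        print(step)
```

In a real system, the scoring function is where the learned policy would sit, and each expansion would be rendered to an image before the user chooses.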

Testing and Evaluating PASTA’s Performance

To gauge the effectiveness of PASTA, researchers conducted a series of evaluations against a baseline model. They tested three configurations of the agent:

1. Trained solely on real data.

2. Trained only on simulated data.

3. Trained on a blend of both.

The results showed that agents trained on only real or only simulated data were not significantly better than the baseline, whereas the agent trained on both clearly outperformed it: 85% of human raters preferred images generated by PASTA over those from the baseline.

Conclusion and Future Implications

PASTA signals a promising future where generative AI becomes more collaborative, personalized, and user-centric. Researchers at Google are excited about the potential applications of their methods in various generative tasks beyond image creation.

For those interested in advancing this field, the team has open-sourced the sequential rater dataset and simulated user data, inviting the community to build on the work. With PASTA, closer collaboration between human creativity and AI capabilities is starting to take shape.